Could higher-temperature liquid cooling change more than just thermal design? Fred Miller, Creighton Couch, PE, and Patrick Sweeney, PE, of Salas O’Brien believe it could also reshape the economics, scalability, and resilience of next-generation AI data centres.

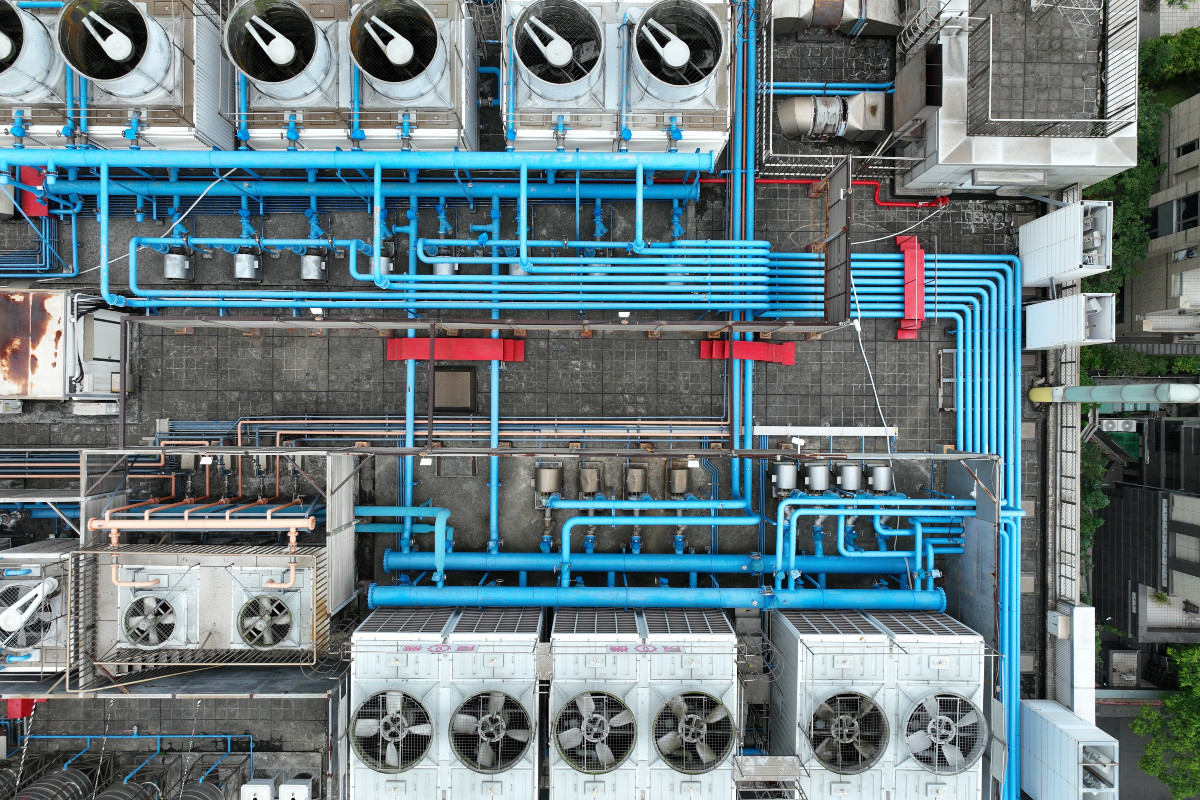

The rapid growth of AI and high-performance computing is fundamentally changing data centre cooling design. Rack densities that once stretched the limits at 150 kW are now the baseline, with many new deployments exceeding 200 kW per rack and continuing to increase. At these power levels, cooling is no longer a background utility; it is a primary driver of energy consumption, infrastructure sizing and total cost of ownership.

From an engineering standpoint, this shift highlights the limits of traditional low-temperature chilled-water systems. Compressor-heavy designs built around narrow supply-temperature bands demand significant electrical input, limit economisation opportunities and do not scale efficiently as sites expand from single buildings to multi-hundred-megawatt campuses. Efficiency losses that were once manageable now create substantial operating costs in AI-dense environments running continuously at near-peak loads.

For owners and developers, these technical limits directly affect financial outcomes. Cooling energy is now a significant factor in utility demand, power supply allocation for IT loads and long-term operational costs. Consequently, cooling strategies now span mechanical design, power planning and capital investment. In this evolving context, cooling choices are driven not just by thermal needs, but also by economic optimisation, risk management and support for scalable growth.

What’s changed: silicon roadmaps align with warmer coolant

Recent market developments are turning what was once a theoretical shift in liquid cooling into a practical inflection point for the industry. In particular, new-generation GPUs can be cooled with warmer coolant, which improves system-level efficiency and reduces infrastructure overhead.

At CES 2026, NVIDIA unveiled its next-generation Rubin platform, building on the liquid-cooled Blackwell architecture and designed to operate with warm-water supply loops around 45°C. This goes beyond simply raising silicon’s temperature tolerance and could change how data centre cooling is architected.

Direct liquid cooling captures heat far more efficiently than air, and Rubin’s warm-water operation extends that advantage by potentially reducing reliance on traditional chillers and lowering fan and compressor energy requirements. This can translate into energy and cost savings at the system level, particularly when scaled across racks and halls.

NVIDIA’s support for higher-temperature liquid cooling may also reduce perceived technical and commercial risk for operators. OEM partners such as Supermicro are expanding manufacturing capacity and offering liquid-cooled, rack-scale solutions optimised for these platforms, signalling broader ecosystem confidence in warmer coolant classes. This wider industry support may help address concerns from owners, insurers and lenders about whether these cooling methods will be reliable and properly supported over time.

For owners and operators, the implications are both practical and financial. Silicon roadmaps are aligning with liquid-cooling architectures that support warmer supply loops and chiller-less heat rejection. This creates opportunities for facilities to shift from capital-intensive mechanical cooling plants towards simpler, more scalable thermal design approaches. It can also reduce the operational energy footprint while lowering the complexity and cost of commissioning and maintaining large-scale cooling systems, particularly in high-density AI deployments.

From equipment cooling to thermal management

The shift to higher-temperature liquid cooling changes the objective from cooling equipment to managing heat as efficiently as possible. By operating with warmer supply loops, facilities can reduce or even eliminate mechanical chilling, expand the hours of dry or economised heat rejection, and materially lower parasitic electrical load per megawatt of IT.
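The link between supply temperature and economised hours can be illustrated with a rough sketch. It assumes a dry cooler can meet the setpoint whenever the ambient dry-bulb temperature plus a fixed approach is at or below the loop supply temperature. The 8 K approach, the function name and the ambient samples are illustrative assumptions, not figures from any specific project.

```python
def economised_fraction(ambient_c, supply_c, approach_k=8.0):
    """Fraction of samples in which dry heat rejection alone could meet the setpoint.

    ambient_c  : iterable of ambient dry-bulb temperatures in degrees C
    supply_c   : loop supply temperature setpoint in degrees C
    approach_k : assumed dry-cooler approach in kelvin (hypothetical value)
    """
    samples = list(ambient_c)
    if not samples:
        raise ValueError("at least one ambient sample is required")
    # A sample counts as economised when ambient plus approach does not exceed the setpoint.
    free = sum(1 for t in samples if t + approach_k <= supply_c)
    return free / len(samples)


# Made-up ambient samples for illustration only.
AMBIENT_SAMPLES_C = [5, 12, 18, 24, 29, 33, 36, 38]

low_temp_share = economised_fraction(AMBIENT_SAMPLES_C, supply_c=20.0)
warm_loop_share = economised_fraction(AMBIENT_SAMPLES_C, supply_c=45.0)
```

With these made-up samples, the 45°C loop qualifies for dry rejection in a much larger share of conditions than the 20°C loop. A real assessment would use hourly weather data for the site and the approach of the selected heat-rejection equipment.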

For owners, this means reduced operating expense volatility tied to compressor energy, improved PUE at scale, and more stable performance under extreme ambient conditions. Thermal strategy becomes both a design choice and a lever for financial and operational resilience.

Multi-tier architectures in practice

In practice, next-generation liquid cooling extends well beyond a single direct loop serving all equipment. Designs use multi-tier thermal architectures, layering cooling loops to match the diverse thermal characteristics of modern compute loads. For example, a design may use a primary high-temperature liquid loop, engineered for a supply temperature of 40°C, to efficiently collect heat from all liquid-cooled racks.

From there, secondary and tertiary loops are introduced at progressively lower temperature setpoints to support equipment with tighter thermal tolerances. This hierarchical approach enables a single campus to support mixed rack populations exceeding 200 kW per rack without sacrificing thermal performance or energy efficiency.

Advanced controls are crucial for these systems, adjusting supply temperatures and flow routing dynamically based on real-time workload and rack density. They allow high-heat areas to operate using the warm loop whenever possible while supplying cooler fluid to more sensitive equipment as needed. New commercial products from liquid-cooling OEMs support this move towards temperature-aware distribution. They can handle hundreds of kilowatts per rack and higher inlet temperatures, which helps lower parasitic loads and improve overall system efficiency.
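One way to picture temperature-aware distribution is as a simple selection rule: each rack is served by the warmest loop whose supply temperature it can tolerate. The sketch below is a minimal illustration of that idea, not a real control system. The 40°C primary setpoint comes from the example above; the secondary and tertiary setpoints, the rack limits and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Loop:
    name: str
    supply_c: float  # loop supply temperature in degrees C


@dataclass(frozen=True)
class Rack:
    name: str
    max_inlet_c: float  # highest coolant inlet temperature the rack tolerates


def assign_loop(rack, loops):
    """Pick the warmest loop whose supply temperature the rack can accept."""
    eligible = [loop for loop in loops if loop.supply_c <= rack.max_inlet_c]
    if not eligible:
        raise ValueError(f"no loop is cold enough for rack {rack.name}")
    return max(eligible, key=lambda loop: loop.supply_c)


# Hypothetical three-tier plant: only the 40 degree C primary comes from the article.
LOOPS = [Loop("primary", 40.0), Loop("secondary", 30.0), Loop("tertiary", 20.0)]

# Hypothetical racks with assumed inlet limits.
RACKS = [Rack("gpu-01", 45.0), Rack("storage-01", 32.0), Rack("legacy-01", 25.0)]

assignments = {rack.name: assign_loop(rack, LOOPS).name for rack in RACKS}
```

Preferring the warmest eligible loop keeps as much heat as possible on the tier that is cheapest to reject. A production controller would also have to account for flow limits, redundancy and transient behaviour, which this sketch ignores.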

Operational and economic benefits

The practical advantages of multi-tier thermal architectures become clear in operation. By aligning coolant temperature with each rack’s specific demands, facilities can avoid unnecessary overcooling. This reduces energy use by pumps and fans and allows heat rejection systems to operate closer to their optimal setpoints.

These efficiency improvements occur automatically through controls, without requiring operator adjustment. Ultimately, the cooling system responds in proportion to workload levels rather than operating on worst-case assumptions.
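The proportional response follows from the basic heat balance, in which heat removed equals mass flow times specific heat times the coolant temperature rise. The sketch below estimates the coolant flow a rack needs for a given load. The fluid properties approximate warm water and are assumptions; water-glycol mixtures have different values, and the function name and example loads are illustrative.

```python
def required_flow_lpm(load_kw, delta_t_k, cp_kj_per_kg_k=4.18, density_kg_per_l=0.99):
    """Coolant flow in litres per minute needed to absorb load_kw.

    Uses the heat balance load = mass_flow * cp * delta_t.
    cp and density are assumed values approximating warm water.
    """
    if load_kw < 0:
        raise ValueError("load must not be negative")
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    mass_flow_kg_per_s = load_kw / (cp_kj_per_kg_k * delta_t_k)
    litres_per_s = mass_flow_kg_per_s / density_kg_per_l
    return litres_per_s * 60.0


# Illustrative values: a 200 kW rack with an assumed 10 K coolant temperature rise.
full_load_flow = required_flow_lpm(200.0, 10.0)   # about 290 L/min
half_load_flow = required_flow_lpm(100.0, 10.0)   # half the load needs half the flow
```

Because flow scales linearly with load at a fixed temperature rise, a controller that tracks actual workload can pump considerably less than one sized permanently for the worst case.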

For owners, this can improve operation and reliability in several ways:

- Preserves efficiency without sacrificing flexibility. High-density AI racks can coexist with lower-density equipment without forcing the entire plant into a low-temperature operating regime.

- Creates a pathway for phased density increases without requiring wholesale plant redesign. As GPU platforms evolve and rack power climbs, thermal infrastructure can adapt incrementally rather than being replaced. In this sense, thermal strategy also supports future capacity growth.

- Introduces thermal diversity and enhances reliability. Multiple temperature loops create operational options during maintenance events, extreme ambient conditions or component failures. Loads can be shifted between loops, temperature bands can be adjusted dynamically, and portions of the plant can be serviced without forcing the entire facility into a constrained operating mode.

The economic benefits may also be substantial. In some recent high-density deployments, warmer primary loops and right-sized secondary systems have reduced required chiller tonnage, lowered the electrical infrastructure dedicated to cooling, and decreased projected lifetime energy costs per megawatt of IT load. Equally important, scalable thermal design can enable density growth without proportional increases in mechanical plant size. This helps protect capital deployment while preserving long-term campus flexibility.

For a balanced perspective, it is important to consider the added design sophistication. Multi-tier liquid cooling systems are more complex to engineer than single-loop or traditional chilled-water plants. They require integrated modelling, careful hydraulic design and advanced control strategies. However, this complexity is typically addressed during engineering and commissioning rather than in day-to-day operations. In practice, operators may experience fewer emergency cooling events, more predictable performance envelopes and reduced manual intervention because the system is designed to adapt automatically.

The new thermal economics

As AI infrastructure grows, cooling must be assessed on more than peak tonnage or initial cost. The new economics favour designs that lower energy use, reduce risk through diversity, and enable long-term density growth. For owners and developers, the key question is no longer whether liquid cooling is necessary, but how to incorporate an intelligent thermal approach into the financial and operational plans of the campus.