Teresa Monteiro, Director of Marketing at Nokia, explains why AI’s scale-out demands are pushing optics closer to the heart of the data centre.

Artificial intelligence is rewriting the design rules for data centres (DCs). Training and inference demand large clusters of GPUs working in concert, all of which need to be interconnected within power- and space-constrained premises.

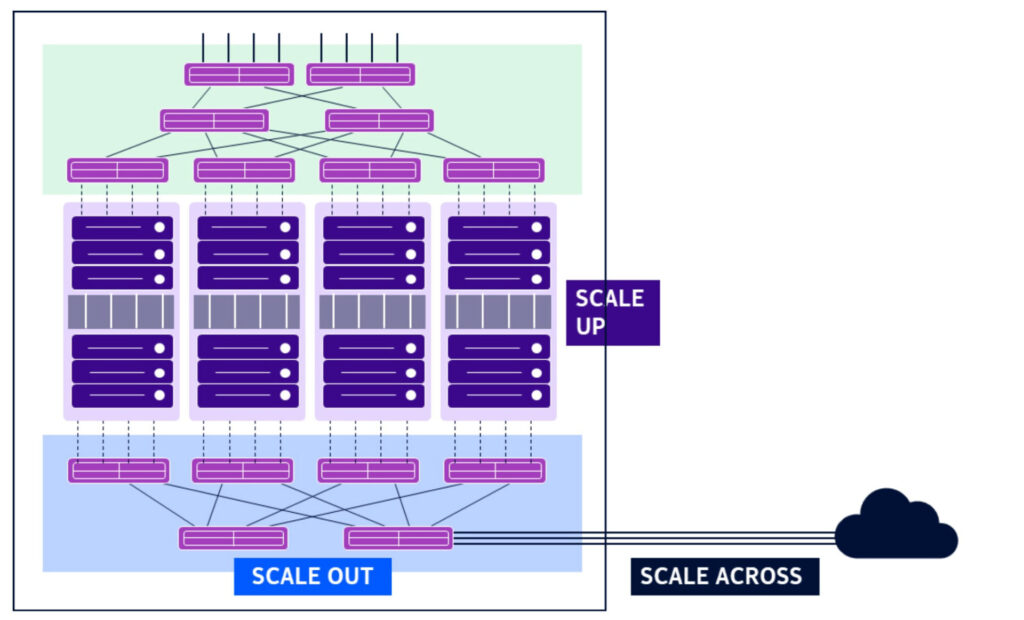

Traditionally, data centre scaling meant adding larger processors or increasing the number of cores in a rack – an approach known as scale-up. AI has fundamentally changed this pattern.

While GPU performance has improved rapidly, it has not kept pace with the growth of AI models and workloads. As a result, DC operators need to connect hundreds of thousands of GPUs across racks and buildings (scale-out) and, in some cases, between geographical locations (scale-across) to form a single, unified computing system.

Intra-DC networks – the back-end links within a data centre connecting GPUs to support workload distribution – must transfer terabits per second per port, with latency measured in nanoseconds to microseconds, and do so efficiently at scale. That is driving optics closer to the heart of the data centre.

Industry analysts, such as LightCounting, project that sales of Ethernet optical transceivers and co-packaged optics will double over the next five years, with intra-DC applications accounting for the overwhelming share of that growth.

From copper to optics

Copper has long been the workhorse for very short-reach intra-DC connectivity. This inexpensive material has high conductivity, good malleability, and thermal stability. The physics of copper cannot be ignored, however; as bandwidth and transmission distance increase, insertion loss, crosstalk, reflection, power consumption, and heat all rise.

Fibre, though more expensive per connection today, offers greater bandwidth with low attenuation, immunity to electromagnetic interference, and thinner, airflow-friendly cabling. The trade-off is complexity: optical interconnects require electrical-to-optical conversion, which adds cost and power consumption.

The most common intra-DC approaches use copper for distances up to 5m, typically within a rack, and optics for rack-to-rack connections and beyond. But as data centres expand, space for optical interfaces, their cost, and, above all, their power consumption are coming under greater scrutiny.

Why pluggable optics are evolving: FRO, LRO and LPO

Data centre power consumption is not merely an operating cost. The total power available to the facility is limited by the surrounding electrical grid, and every watt consumed by an optical interface is a watt unavailable to a revenue-generating GPU. Additionally, optical interfaces are installed in hosts such as switches, routers, or network interface cards (NICs), each with limited power and cooling available per port.
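The budget argument above can be made concrete with a rough sketch. Every figure below (facility budget, GPU power, module count) is a hypothetical placeholder chosen for illustration, not a number from this article, and the model ignores cooling and other overheads.

```python
# Hypothetical figures for illustration only; none come from the article.
FACILITY_BUDGET_W = 50_000_000   # assumed grid-limited facility budget (50 MW)
GPU_POWER_W = 1_000              # assumed power draw per GPU
NUM_OPTICAL_MODULES = 200_000    # assumed number of optical interfaces


def gpus_supported(budget_w, optics_w_per_module, num_modules, gpu_w):
    """Whole GPUs that fit in the budget after optics take their share."""
    optics_total_w = optics_w_per_module * num_modules
    remaining_w = budget_w - optics_total_w
    if remaining_w < 0:
        raise ValueError("optics alone exceed the facility budget")
    return remaining_w // gpu_w


def gpus_gained(watts_saved_per_module, num_modules, gpu_w):
    """Extra whole GPUs that a per-module saving frees up across the fleet."""
    return (watts_saved_per_module * num_modules) // gpu_w


if __name__ == "__main__":
    # Under these assumptions, shaving 5 W off each module frees power
    # for additional GPUs within the same fixed facility budget.
    print(gpus_gained(5, NUM_OPTICAL_MODULES, GPU_POWER_W))
```

With a fixed budget, the saving scales linearly with the number of interfaces, which is why per-module watts matter more as clusters grow.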

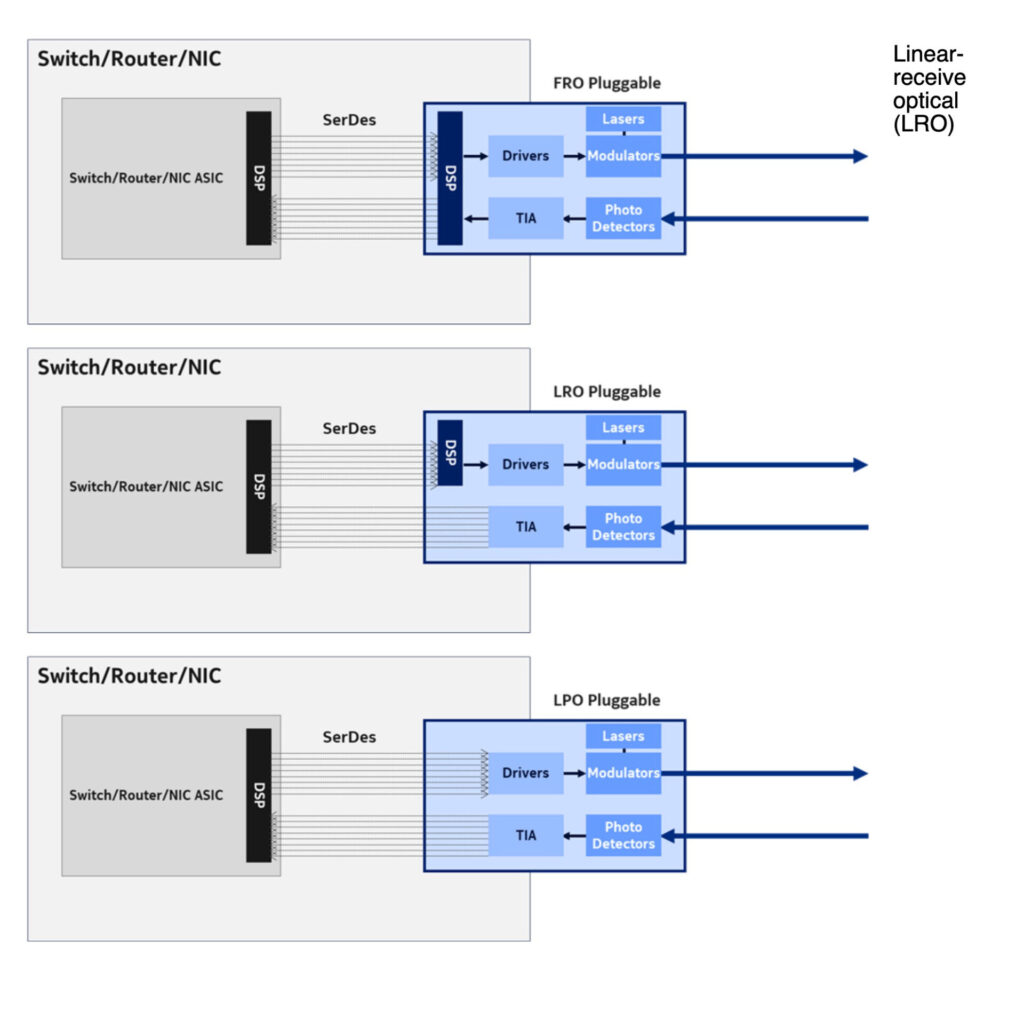

What, then, are the options in optics layout to address power consumption? Standard fully retimed optical (FRO) pluggables have dominated successive generations of optics and remain widely used for coherent devices.

FRO modules incorporate digital signal processing on both the transmit (Tx) and receive (Rx) sides, with complex algorithms for signal retiming, equalisation, and forward error correction. The DSP is needed because the electrical interface between the host ASIC and an optical module consists of multiple high-speed SerDes lanes, each operating as an independent serial channel. For a host port with 1.6Tb/s capacity, eight copper SerDes lanes, each running at 224Gb/s, are aggregated into a single optical interface.
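The lane arithmetic is worth spelling out, because eight lanes at 224Gb/s add up to more than 1.6Tb/s. The short sketch below uses only the figures quoted above. Reading the difference as headroom for encoding and error-correction overhead is an assumption here, since the article does not break it down.

```python
LANES = 8                       # SerDes lanes per port (from the text)
RAW_RATE_PER_LANE_GBPS = 224    # per-lane signalling rate (from the text)
NOMINAL_PORT_GBPS = 1600        # 1.6 Tb/s port capacity (from the text)


def raw_aggregate_gbps(lanes, rate_per_lane_gbps):
    """Total raw signalling rate across all lanes."""
    return lanes * rate_per_lane_gbps


def payload_per_lane_gbps(port_gbps, lanes):
    """Nominal port capacity divided evenly across the lanes."""
    return port_gbps / lanes


def headroom_fraction(raw_gbps, nominal_gbps):
    """Share of the raw rate not counted in the nominal capacity.

    Assumption: this gap is treated as overhead headroom; the article
    does not state how it is used.
    """
    return (raw_gbps - nominal_gbps) / raw_gbps


if __name__ == "__main__":
    raw = raw_aggregate_gbps(LANES, RAW_RATE_PER_LANE_GBPS)
    print(f"raw aggregate: {raw} Gb/s")
    print(f"nominal per lane: {payload_per_lane_gbps(NOMINAL_PORT_GBPS, LANES)} Gb/s")
    print(f"headroom: {headroom_fraction(raw, NOMINAL_PORT_GBPS):.1%}")
```

In other words, each lane carries a nominal 200Gb/s share of the port, with roughly a tenth of the raw signalling rate left over.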

At high data rates, signal loss and interference over copper become significant, even for short SerDes traces on the host printed circuit board. FRO pluggables correct for those SerDes impairments and also compensate for optical impairments along the fibre, ensuring robust signal integrity across both the electrical and optical domains. This processing also extends optical reach: retimed pluggables using a typical intra-DC intensity-modulated direct-detection (IMDD) format, such as PAM4 operating at 1.6T, have been shown to cover distances above 5km.

The trade-offs are latency and power consumption: a 1.6Tb/s FRO introduces 100–200ns of latency and consumes approximately 25W per module.

Two more power-efficient pluggable designs suited to 1.6T intra-DC optics have emerged and recently gained traction.

Linear-receive optical (LRO) pluggables keep a DSP on the Tx side but drop it on the Rx side, letting the host ASIC process the incoming signal. This takes advantage of the fact that the electrical signals in the SerDes lanes and the intra-DC optical signal use a common modulation format, IMDD PAM4, allowing the DSPs within the host ASIC to do the processing. LROs reduce pluggable power consumption by more than one third, to approximately 16W per module, and cut latency by roughly half compared with FRO.

Linear pluggable optics (LPOs) go even further, eliminating the DSP from both Tx and Rx paths. The optical module is effectively analogue, focused on electrical-to-optical and optical-to-electrical conversion, while the host ASIC’s SerDes handles all signal processing.

LPOs can achieve sub-5ns module latency and reduce power consumption to less than 10W per module, provided the host has been specifically designed to handle signal losses and distortions. The LPO architecture is particularly relevant for short-reach connections within a data centre, typically 100m to 2km, where density and energy efficiency are the main priorities.
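To compare the three pluggable designs on a common footing, the approximate module power figures quoted in this section can be converted to energy per bit at 1.6Tb/s. This is a back-of-the-envelope sketch: the function names are illustrative, and the LPO value is taken at its 10W upper bound, so its real figures would be somewhat better.

```python
PORT_RATE_BPS = 1.6e12  # 1.6 Tb/s port

# Approximate per-module power quoted in the text.
# The LPO entry is an upper bound ("less than 10W").
MODULE_POWER_W = {"FRO": 25.0, "LRO": 16.0, "LPO": 10.0}


def energy_per_bit_pj(power_w, rate_bps=PORT_RATE_BPS):
    """Module power divided by line rate, in picojoules per bit."""
    return power_w / rate_bps * 1e12


def saving_vs_fro(power_w, fro_power_w=MODULE_POWER_W["FRO"]):
    """Fractional power reduction relative to a fully retimed module."""
    return 1 - power_w / fro_power_w


if __name__ == "__main__":
    for name, power_w in MODULE_POWER_W.items():
        print(
            f"{name}: {energy_per_bit_pj(power_w):.2f} pJ/bit, "
            f"{saving_vs_fro(power_w):.0%} below FRO"
        )
```

On these figures, FRO works out at roughly 15.6 pJ/bit, LRO at about 10 pJ/bit (a 36% saving, consistent with "more than one third"), and LPO at no more than about 6.25 pJ/bit (a saving of at least 60%).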

Bringing optics closer to the ASIC: NPO and CPO

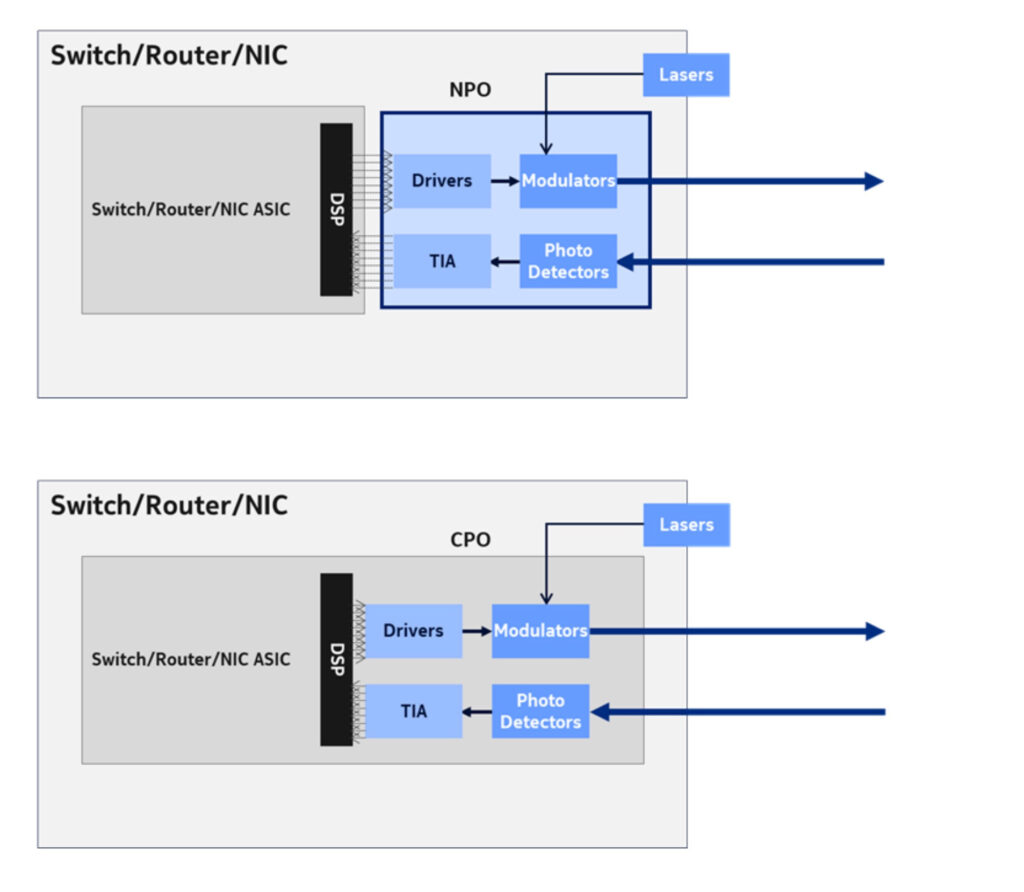

Two further architectural options for reducing optics power consumption bypass the pluggable module altogether.

Near-package optics (NPO) remove the optical engine from the pluggable module and place it directly in the host blade, close to the ASIC. This approach reduces SerDes distances and losses, improves signal integrity, and lowers power consumption. An external pluggable laser source is recommended, since lasers generate significant heat and show steep temperature dependencies, so they perform poorly inside a hot host; using an external laser also makes replacement easier in the event of failure. However, this design sacrifices the flexibility of hot-swappable optics, introducing manufacturability challenges and co-design dependencies between the host platform and the optical implementation. This is a manageable limitation for hyperscalers that build custom infrastructure, but a more significant consideration for the broader market.

Co-packaged optics (CPO) take this approach a step further by integrating optics with the host ASIC on the same substrate and package, with only the laser remaining external. By reducing SerDes losses to just a few decibels, CPO delivers ultra-low, sub-nanosecond latency and strong power efficiency per bit. Bringing CPO solutions to market is not without challenges, however. Packaging, yield, and thermal engineering remain difficult, and industry standards bodies are working to improve interoperability.

The road ahead

As AI scales, every watt saved counts. The industry has reduced power per gigabit by two orders of magnitude in the last decade, thanks to advances in CMOS semiconductor technology and innovations in pluggable optics. Layout and packaging advances that minimise SerDes trace length will help sustain that progress.

But, as is often the case, there is no single best option for intra-DC optical connectivity. Instead, there is a portfolio of solutions, each optimised for a different balance of reach, power, density, latency, serviceability, and supply-chain flexibility.

Intra-DC networks are becoming an extension of compute. Advanced optics – whether FRO, LRO or LPO pluggables, near-package, co-packaged, or future architectural evolutions – are helping to enable that shift.