As DRAM prices surge and lead times stretch, Chris Carreiro, Chief Technology Officer at Park Place Technologies, explains why memory shortages are becoming a serious constraint on AI infrastructure plans.

The UK’s data centre pipeline is breaking records. London alone is on track to add 180MW of new capacity in 2026, following a record-breaking 193MW in 2025. Investment is flowing, ambition is high, and the government’s AI Opportunities Action Plan has given organisations across every sector a mandate to modernise.

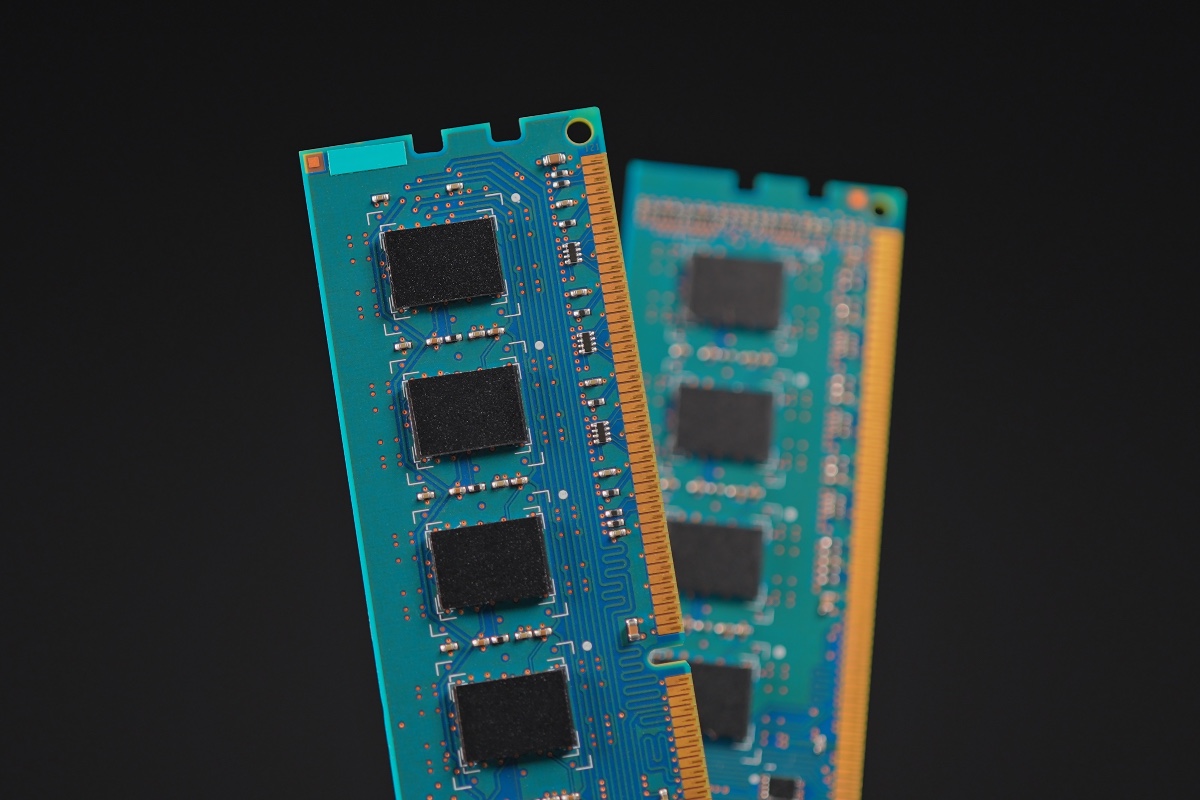

But at the very moment enterprises are racing to scale AI infrastructure, prices for Dynamic Random Access Memory (DRAM), the technology that powers servers and storage arrays, have surged 171% year on year. Lead times have stretched from weeks into months, and enterprise-grade hardware has become a moving target.
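To put that increase in concrete terms, a 171% rise means a price roughly 2.71 times the earlier one, not 1.71 times. The short Python sketch below applies that multiplier to a purely hypothetical memory budget. The budget and per-module price are illustrative assumptions, not figures from any real procurement plan.

```python
# Illustrative arithmetic only. The 171% figure is the year-on-year rise
# cited in the article; the budget and unit price are hypothetical values.

def price_after_increase(baseline: float, percent_increase: float) -> float:
    """Return the new price after a percentage increase on a baseline."""
    return baseline * (1 + percent_increase / 100)


def units_affordable(budget: float, unit_price: float) -> int:
    """Return how many whole units a fixed budget can buy."""
    return int(budget // unit_price)


DRAM_INCREASE_PCT = 171          # year-on-year rise cited above
hypothetical_budget = 100_000.0  # assumed memory spend planned a year ago
hypothetical_unit_price = 500.0  # assumed price per module a year ago

new_unit_price = price_after_increase(hypothetical_unit_price, DRAM_INCREASE_PCT)
before = units_affordable(hypothetical_budget, hypothetical_unit_price)
after = units_affordable(hypothetical_budget, new_unit_price)

print(f"Unit price: {hypothetical_unit_price:.2f} -> {new_unit_price:.2f}")
print(f"Modules affordable on the same budget: {before} -> {after}")
```

Under these assumed numbers, a budget that once covered 200 modules now covers 73, which is why plans priced a year or more ago can fall well short.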

This global memory shortage is cutting off the oxygen supply for projects that depend on it – and unlike previous hardware disruptions, the forces driving this one are deliberate, structural, and far harder to reverse. To understand what needs to change, we first need to understand what is driving it.

Understanding the structural reallocation

What makes this shortage distinct is that it isn’t being driven by a weather event, shipping disruption or natural disaster. This is a deliberate allocation decision by the world’s largest memory manufacturers, redirecting limited production capacity away from standard enterprise DRAM and towards the high-bandwidth memory that AI infrastructure demands.

This dynamic is what shapes the recovery timeline. Cleanrooms and semiconductor fabrication facilities are among the most complex and capital-intensive environments in modern manufacturing. They take years to build and commission. There is no quick fix. The shortage could persist for years, and the structural reality of how this memory is produced will continue to strain supply chains long after headlines move on. When it comes to pricing, there is little comfort either. At best, increases plateau and DRAM stabilises at a higher premium through 2026; further hikes and extended lead times well into next year remain the more probable outcome.

As enterprises across every sector accelerate their push to modernise, they are being forced to think about how to scale AI infrastructure at the very moment components are so hard to source.

Resetting infrastructure assumptions

For decades, IT infrastructure strategies were built on reliable foundations: stable supply chains, predictable pricing, and a hardware refresh cycle every few years. The move to AI workloads has dismantled all three assumptions at once.

The tension is direct and immediate. The AI-driven demand pushing organisations to expand their data centre footprint is simultaneously depleting the supply chains that make that expansion possible. Organisations locked into multi-year digital transformation plans – built on procurement assumptions made two or three years ago – are now finding that hardware is no longer available at the price they planned for, and in some cases, not available at all.

This is a structural reset, not a temporary disruption. The question for infrastructure leaders can no longer be when supply will normalise. It must be how to build and operate resilient infrastructure in an environment where volatility is the baseline condition.

Adapting procurement strategies

The pressure is falling disproportionately on small and medium enterprises, who are finding it increasingly difficult to reliably procure the hardware they need. Larger organisations with established vendor relationships and procurement scale have more room to absorb delays and cost increases; smaller enterprises do not have that buffer.

For those organisations, the priority now is getting smarter about what they allocate and where they deploy it. Rather than waiting for OEM supply chains to recover, forward-thinking teams are broadening how and where they source infrastructure components. This means looking beyond traditional OEM channels and rethinking sourcing strategies entirely. This approach was once considered a workaround but is increasingly recognised as sound strategic practice.

The organisations navigating this most effectively are those that have built flexibility into how they source, not just what they source. And they are finding themselves much better placed to keep projects moving than those that have not. Concentration in any supply chain introduces risk. In a constrained market, that principle applies to hardware procurement as much as anywhere else.

Designing for persistent uncertainty

Beyond procurement, extending hardware lifecycles and maximising the efficiency of existing assets remain among the most consistently underused responses to a constrained market.

When supported by robust maintenance and predictive monitoring, extending asset lifecycles can significantly reduce refresh frequency and exposure to volatile procurement windows. Organisations that have structured their operations around just-in-time component availability are now bearing the cost of that assumption. Maintaining even modest reserves of critical hardware offers a practical degree of insulation against procurement delays.
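The link between lifecycle length and procurement exposure can be sketched with simple arithmetic. The Python snippet below counts how many refresh purchases fall within a planning horizon for different lifecycle lengths. The 15-year horizon and the 3-, 5- and 7-year lifecycles are hypothetical values chosen for illustration, and the model assumes a refresh is due at the end of each full lifecycle.

```python
# A minimal sketch under stated assumptions: every number here is
# hypothetical, and a refresh is assumed to fall due at the end of
# each completed lifecycle within the planning horizon.

def refresh_events(horizon_years: int, lifecycle_years: int) -> int:
    """Count the refresh purchases that fall within the planning horizon."""
    if lifecycle_years <= 0:
        raise ValueError("lifecycle_years must be positive")
    return horizon_years // lifecycle_years


HYPOTHETICAL_HORIZON_YEARS = 15

for lifecycle in (3, 5, 7):
    events = refresh_events(HYPOTHETICAL_HORIZON_YEARS, lifecycle)
    print(f"{lifecycle}-year lifecycle: {events} refresh purchases "
          f"over {HYPOTHETICAL_HORIZON_YEARS} years")
```

Each purchase avoided is one fewer occasion on which a team must buy into whatever pricing and lead times the market offers at that moment.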

Better visibility into asset health and more proactive maintenance are not complex or costly undertakings, yet they rarely receive the investment they merit. They represent the most practical and high-value actions available to infrastructure teams.

There is no procurement strategy that bypasses the memory shortage entirely. But organisations that act now, by questioning inherited assumptions about sourcing, sweating existing assets harder, and designing for flexibility rather than efficiency, will be far better positioned to endure this period and maintain momentum in the long term.